AI Reporting Assistant

Generates standardized incident reports from free text, capturing what happened, when/where, who was involved, why it occurred, and actions taken.

Data creation: Generated a dataset of reports, by automating prompts through an API, to serve as ground truth (reference) for training the model.

Model selection and experimentation plan : Test different Hugging Face models to evaluate their performance. Vary the generation parameters (temperature, top_k, top_p, presence_penalty, etc.) and compare the results.

Evaluation metrics : Text-to-text comparison of generated reports against the reference reports, using token-overlap metrics (BLEU/ROUGE) and attention-based model metrics (BERTScore, cross-encoder similarity), among others.

Model training : Used QLoRA with a supervised fine-tuning (SFT) strategy on a small model (fewer than 1 billion parameters) to specialize it in the reporting task.

Web application interface to ease accessibility and report download.
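To illustrate the token-overlap side of the evaluation metrics listed above, here is a minimal sketch of a ROUGE-1 style F1 score in plain Python. It is not the project's actual evaluation code: the tokenizer is a simplification, the function names `tokenize` and `rouge1_f1` are hypothetical, and the two example reports are made up for illustration.

```python
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase and keep alphanumeric runs (a deliberate simplification)."""
    return re.findall(r"[a-z0-9]+", text.lower())


def rouge1_f1(reference: str, candidate: str) -> float:
    """Unigram-overlap F1 between a reference report and a generated one."""
    ref_counts = Counter(tokenize(reference))
    cand_counts = Counter(tokenize(candidate))
    # Clipped overlap: a token counts only as often as it appears in both texts.
    overlap = sum((ref_counts & cand_counts).values())
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand_counts.values())
    recall = overlap / sum(ref_counts.values())
    return 2 * precision * recall / (precision + recall)


# Illustrative (invented) reference and generated reports.
reference = "Forklift struck a shelf in warehouse B at 09:30; no injuries."
candidate = "A forklift struck a shelf in warehouse B; no injuries reported."
score = rouge1_f1(reference, candidate)
print(f"ROUGE-1 F1: {score:.3f}")
```

A score like this only rewards shared words, which is why it is usually paired with model-based metrics such as BERTScore or cross-encoder similarity that can credit paraphrases.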

OpenClaw Assistant

I undertook the research and installation of OpenClaw, a versatile AI assistant managed through Telegram. This project showcases the deployment of OpenClaw on an AWS EC2 instance, integrating it with various AI and ML skills to function as a personalized assistant, controlled via direct Telegram commands.

Deployment & Setup : Installed OpenClaw on an AWS EC2 instance, configured for optimal performance and security, including essential skills like clawdhub, goplaces, nano-3banana-pro, and nano-pdf.

Telegram Integration : Established seamless communication and control through Telegram, enabling personalized interaction and task management via bot commands.

Skill Configuration : Integrated key AI/ML skills for image generation (Gemini 3 Pro), Google Places search, PDF manipulation, and core functionalities, all managed through the bot's interface.

Personalization & Security : Configured OpenClaw with custom duties and security measures, including protection against injection attacks, to act as a reliable personal assistant.

GitHub Integration : Managed repository updates via a dedicated GitHub account (ZbottaBot), demonstrating the ability to edit, commit, and push portfolio content directly.

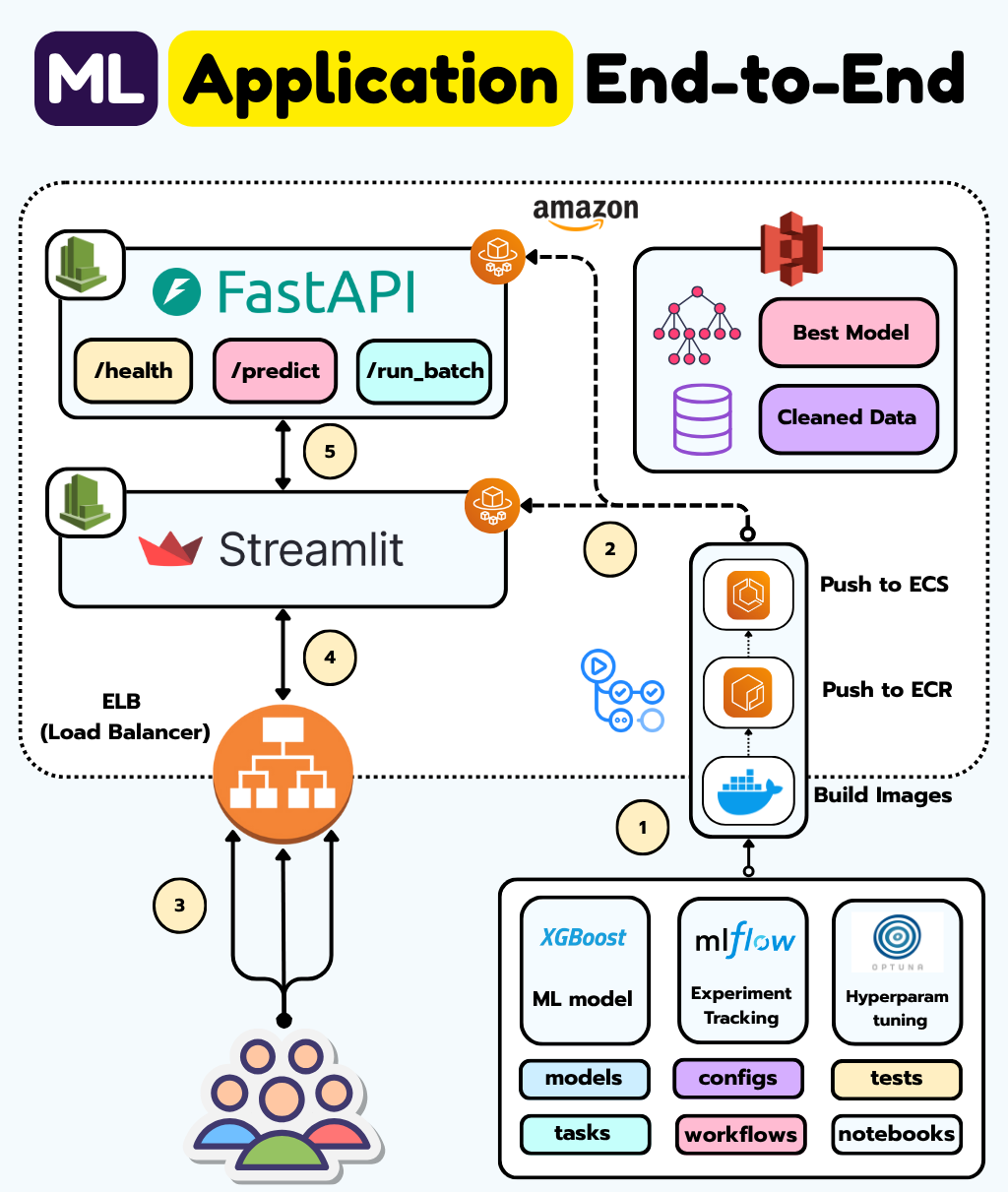

Regression End-to-End ML Pipeline

An end-to-end machine learning project focused on building and deploying a regression model for house price prediction. This project covers the entire ML lifecycle, from data loading and preprocessing to model training, hyperparameter tuning, evaluation, containerization, CI/CD, and deployment on AWS.

Data Pipelines : Implemented robust data loading, preprocessing (cleaning, quality checks with Great Expectations), and feature engineering (transformations, encoding).

Model Optimization : Utilized Optuna for hyperparameter tuning and defined evaluation metrics like BERTScore, BLEU/ROUGE, and cross-encoder similarity.

MLOps Practices : Integrated MLFlow for experiment tracking and set up feature, training, and inference pipelines.

Containerization & CI/CD : Leveraged Docker for reproducibility and GitHub Actions for automated deployment to AWS.

AWS Deployment : Deployed the model as a production API using FastAPI and AWS ECS, with traffic managed by an Application Load Balancer.

Frontend : Developed a user interface using Streamlit for easy access to the model and results.

Cost-Effective : The entire lab costs less than $5 and is largely covered by AWS Free Tier credits.
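As a rough illustration of the quality checks mentioned under Data Pipelines, the sketch below validates housing records in plain Python. It deliberately does not use the Great Expectations API; it only mimics the idea of declaring expectations and rejecting rows that violate them. The column names (`price`, `sqft`, `bedrooms`), the bounds, and the sample rows are assumptions made for this example, not the project's real schema.

```python
from typing import Any

# Hypothetical schema: these column names are illustrative assumptions.
REQUIRED_COLUMNS = ("price", "sqft", "bedrooms")


def check_record(record: dict[str, Any]) -> list[str]:
    """Return human-readable problems found in one record (empty if clean)."""
    problems = []
    for column in REQUIRED_COLUMNS:
        if record.get(column) is None:
            problems.append(f"missing value: {column}")
    price = record.get("price")
    if price is not None and price <= 0:
        problems.append("price must be positive")
    sqft = record.get("sqft")
    if sqft is not None and sqft <= 0:
        problems.append("sqft must be positive")
    bedrooms = record.get("bedrooms")
    if bedrooms is not None and not 0 <= bedrooms <= 20:
        problems.append("bedrooms out of range")
    return problems


def split_valid(records: list[dict[str, Any]]):
    """Separate clean records from rejected ones, keeping the reasons."""
    valid, rejected = [], []
    for record in records:
        problems = check_record(record)
        if problems:
            rejected.append((record, problems))
        else:
            valid.append(record)
    return valid, rejected


# Invented sample rows: one clean, one with a bad price, one with a gap.
rows = [
    {"price": 350000, "sqft": 1400, "bedrooms": 3},
    {"price": -1, "sqft": 900, "bedrooms": 2},
    {"price": 210000, "sqft": None, "bedrooms": 2},
]
valid, rejected = split_valid(rows)
print(len(valid), "valid;", len(rejected), "rejected")
```

Keeping the rejection reasons alongside each row makes it easier to tell whether a failure points to a data-entry problem or to a rule that is too strict.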